LET’S PARSE DDP

Back again with yet another odd protocol to dive into. This time around we’re tackling a pixel protocol named DDP. DDP is another small homebrew protocol that aims to squeeze as much pixel data as possible into one Ethernet frame (1500 bytes) in order to maximize transmission efficiency over local networks. When you’re driving large-scale LED installations, per-packet overhead starts to matter, because dealing with it means introducing other complexity (TCP, packet protocols, etc.). That’s especially true in applications where lost data matters less than latency and throughput.

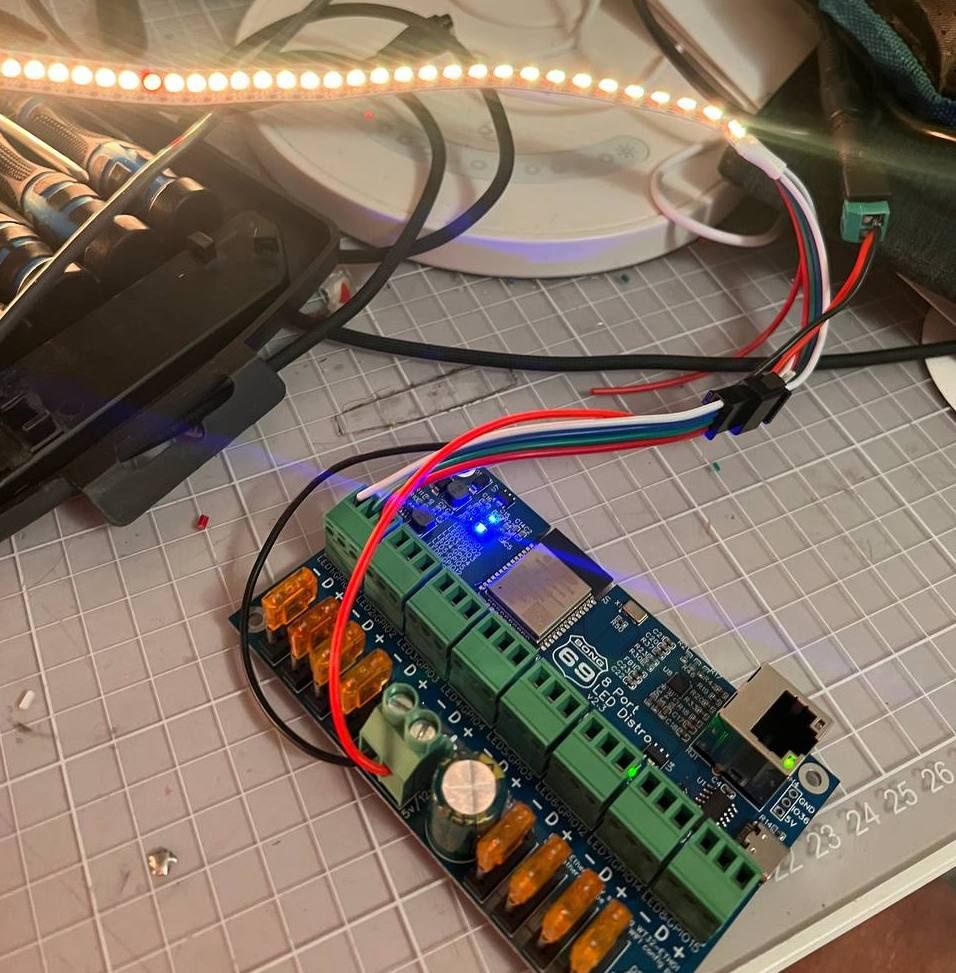

I ended up in this hole because I purchased the 8 Port LED Distro by Bong69 (what a name), which is a small ESP32 controller wired up with an Ethernet port, level shifters, fuses and 8 output connectors for driving LED strips like WS2811/WS2812. The controller runs a piece of software called WLED, an open source project implementing a wide variety of features to drive everything from holiday lights to installations. The reality is I just wanted a simple controller that solved the output problem so that I could stream pixel data to it over wired Ethernet. The alternative was to write software for a Teensy, but given that life has changed to a degree, time is a precious resource these days.

WLED supports E1.31, ArtNet, DDP, tpm2.net and its own UDP realtime protocol. ArtNet and E1.31 were originally designed to control light fixtures and suffer from those compromises (maximum 40FPS framerate for DMX compatibility, multicast), which ruled them out. I started out implementing tpm2.net but quickly realized that the only real user of this protocol seemed to be a German bespoke LED controller manufacturer. That left DDP as the preferred protocol to pursue.

DDP is defined by 3waylabs and seems to be their preferred protocol for their art installations. The webpage has some mentions of Burning Man, so I’m going to assume it’s yet another Burning Man LED installation developer. What I liked about DDP is that it’s extremely smart in how it packs the metadata into the bitstream. DDP makes use of every available bit, which yields a header no larger than 10 bytes. Compare this to E1.31, where the header is 126 bytes. Modern computers don’t care, but for a small microcontroller parsing data, this ends up having an impact on the number of frames per second you can push. DDP sits in the middle here as a “sane” compromise. It doesn’t mandate a framerate, the spec is agnostic about whether you send it over UDP or TCP (although I suspect most vendors only accept UDP) and it’s open-ended in that it relies on JSON for messaging. The only drawback is that clients need to implement JSON parsing if they want to be smart, but that’s table stakes at this point for anything connected.
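To make the 10-byte header concrete, here is a minimal sketch in Rust of how a pixel packet could be put together. The field layout (flags, sequence, data type, destination ID, 32-bit offset, 16-bit length, all big-endian) is my reading of the 3waylabs spec and should be checked against it. The function and constant names are hypothetical and are not the ddp-rs API, and any optional header fields are ignored.

```rust
// Hypothetical constants for illustration; values follow my reading of the spec.
const DDP_VERSION_1: u8 = 0b0100_0000; // version "01" in the top two bits of the flags byte
const DDP_FLAG_PUSH: u8 = 0b0000_0001; // "push" bit: display the data after this packet
const DDP_TYPE_RGB_8BIT: u8 = 0b0000_1011; // type RGB (001), 8 bits per element (011)
const DDP_ID_DEFAULT: u8 = 1; // default output device
const DDP_HEADER_LEN: usize = 10;

/// Builds one DDP packet: a 10-byte header followed by raw pixel bytes.
/// `offset` is the byte offset of this chunk within the whole frame.
fn encode_ddp_packet(seq: u8, offset: u32, pixels: &[u8], push: bool) -> Vec<u8> {
    // The length field is 16 bits wide, so the payload has to fit in it.
    assert!(pixels.len() <= u16::MAX as usize, "payload too large for one packet");
    let len = pixels.len() as u16;

    let mut flags = DDP_VERSION_1;
    if push {
        flags |= DDP_FLAG_PUSH;
    }

    let mut packet = Vec::with_capacity(DDP_HEADER_LEN + pixels.len());
    packet.push(flags); // byte 0: version and flags
    packet.push(seq & 0x0F); // byte 1: 4-bit sequence number
    packet.push(DDP_TYPE_RGB_8BIT); // byte 2: data type
    packet.push(DDP_ID_DEFAULT); // byte 3: destination ID
    packet.extend_from_slice(&offset.to_be_bytes()); // bytes 4-7: data offset
    packet.extend_from_slice(&len.to_be_bytes()); // bytes 8-9: data length
    packet.extend_from_slice(pixels); // payload
    packet
}
```

Calling `encode_ddp_packet(1, 0, &[255, 0, 0], true)` yields 13 bytes: the 10-byte header followed by a single red pixel. Everything a receiver needs to place the data sits in those first 10 bytes, which is what keeps parsing cheap on a microcontroller.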

Implementing DDP

I was set on trying to leverage Copilot and ChatGPT this time around to save time, and banged out a Go implementation in an evening. Coming back to Go always makes me realize how good Rust is. If you took Go and added the Result type and enum pattern matching from Rust, you would have the perfect language, but sadly we’re bound by the C conventions imposed by Rob Pike and friends. After verifying that the Go implementation sent the right bytes, I hooked up the WLED controller, which of course did not work. It turns out that WLED wants to open a return connection to the incoming address before it accepts DDP.

Having a working Go implementation helped in thinking about how to design the Rust version of this. With Go I took some architectural liberties that are forbidden in Rust. On top of that, the Go implementation only implements the subset that allows you to send pixels, not control the display. I took the same approach here, using ChatGPT to assist but it quickly turned out that I had to do a lot of manual work as ChatGPT struggled to understand the documentation. The documentation relies a lot on formatting to convey the spec and ChatGPT didn’t see that nuance.

After another couple of days of bashing, ddp-rs was working. Since I cared a bit more about implementing the entire spec this time around, I wrote some more serious tests to both encode and parse DDP. To test my library, I used WLED’s “output mode”, in which it can spit out a DDP stream, and this is where it started getting weird. My test failed on parsing the bits per pixel from WLED: the value was offset by 2. As always when programming, I assumed it was my fault and started digging. After going back and forth on the spec for hours, I could not understand how the value WLED was sending could be correct, at which point it was time to jump into the WLED source code.
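For reference, this is roughly what parsing the header looks like, including the data type byte where the bits per pixel live. It is a sketch under my reading of the current 3waylabs spec (three type bits followed by three size bits, with size codes 1 to 6 mapping to 1, 4, 8, 16, 24 and 32 bits per element). The struct and function names are hypothetical and not the ddp-rs API.

```rust
/// Hypothetical decoded view of the 10-byte DDP header.
#[derive(Debug, PartialEq)]
struct DdpHeader {
    version: u8,
    push: bool,
    sequence: u8,
    pixel_type: u8,               // raw type bits, e.g. 1 for RGB
    bits_per_element: Option<u8>, // None when the size code is undefined
    destination: u8,
    offset: u32,
    length: u16,
}

/// Parses the first 10 bytes of `buf`; returns None if the buffer is too short.
fn parse_ddp_header(buf: &[u8]) -> Option<DdpHeader> {
    if buf.len() < 10 {
        return None;
    }
    let data_type = buf[2];
    // Low three bits: size code for one pixel element (assumed mapping, see lead-in).
    let bits_per_element = match data_type & 0b111 {
        1 => Some(1),
        2 => Some(4),
        3 => Some(8),
        4 => Some(16),
        5 => Some(24),
        6 => Some(32),
        _ => None,
    };
    Some(DdpHeader {
        version: buf[0] >> 6,                 // top two bits of the flags byte
        push: buf[0] & 0x01 != 0,             // lowest bit of the flags byte
        sequence: buf[1] & 0x0F,              // low nibble
        pixel_type: (data_type >> 3) & 0b111, // three type bits above the size bits
        bits_per_element,
        destination: buf[3],
        offset: u32::from_be_bytes([buf[4], buf[5], buf[6], buf[7]]),
        length: u16::from_be_bytes([buf[8], buf[9]]),
    })
}
```

With this layout a data type byte of `0x0B` decodes to type 1 (RGB) with 8 bits per element. A sender that builds the byte from a different revision of the spec will decode to something else, which is exactly the kind of mismatch a round-trip test catches.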

After digging a bit I was convinced that WLED had implemented it wrong. WLED just hard-codes these values in config instead of calculating them (nothing wrong with this, it’s faster for their use case) and the values were wrong, but why? I opened a pull request with changes and one of the maintainers immediately asked me to fix it upstream. After reviewing the upstream repo, it turned out that the WLED developers had hacked DDP support on themselves and DDP isn’t in the upstream repo. The maintainer then shared that the spec they implemented a while back was different. I used the Wayback Machine and it turns out they were right: the spec actually has changed over time. At this point, the only way to clear this up was to email the person who wrote the spec and ask for the actual story behind this value. I emailed and got an answer quickly. It turns out that this field was initially “to be defined later”, but a lot of people started wanting RGBW support in WLED, which pushed the author of the protocol to implement something quick that later changed. WLED was still on the “initial” implementation. After that, the pull request was accepted and I could return to sanity.

What did we learn?

- Please version your protocol and provide a historical list of the changes.

- I am now firmly in the camp of “Go is not great”. Fantastic runtime, harsh language.

- ChatGPT is amazing, you already knew that though.

Long story short, I implemented DDP for Rust. In case you ever need it, enjoy.